AI Bias is Real

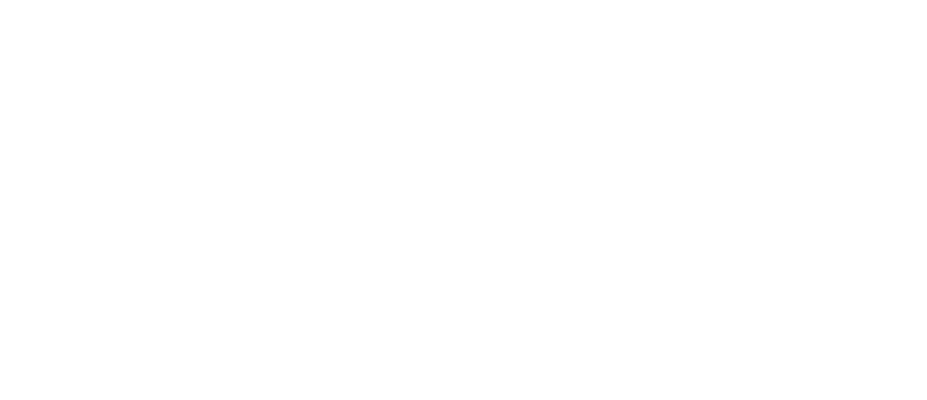

Recently, I asked an AI tool (Perplexity) to give me a prompt for a graphic of NCAA coaches and athletic directors “treating the transfer portal like a Black Friday sale.” I then asked it to generate the image itself rather than using one of my other go-to image-generating AI tools.

I said nothing about gender or any other characteristics of the people in the proposed image.

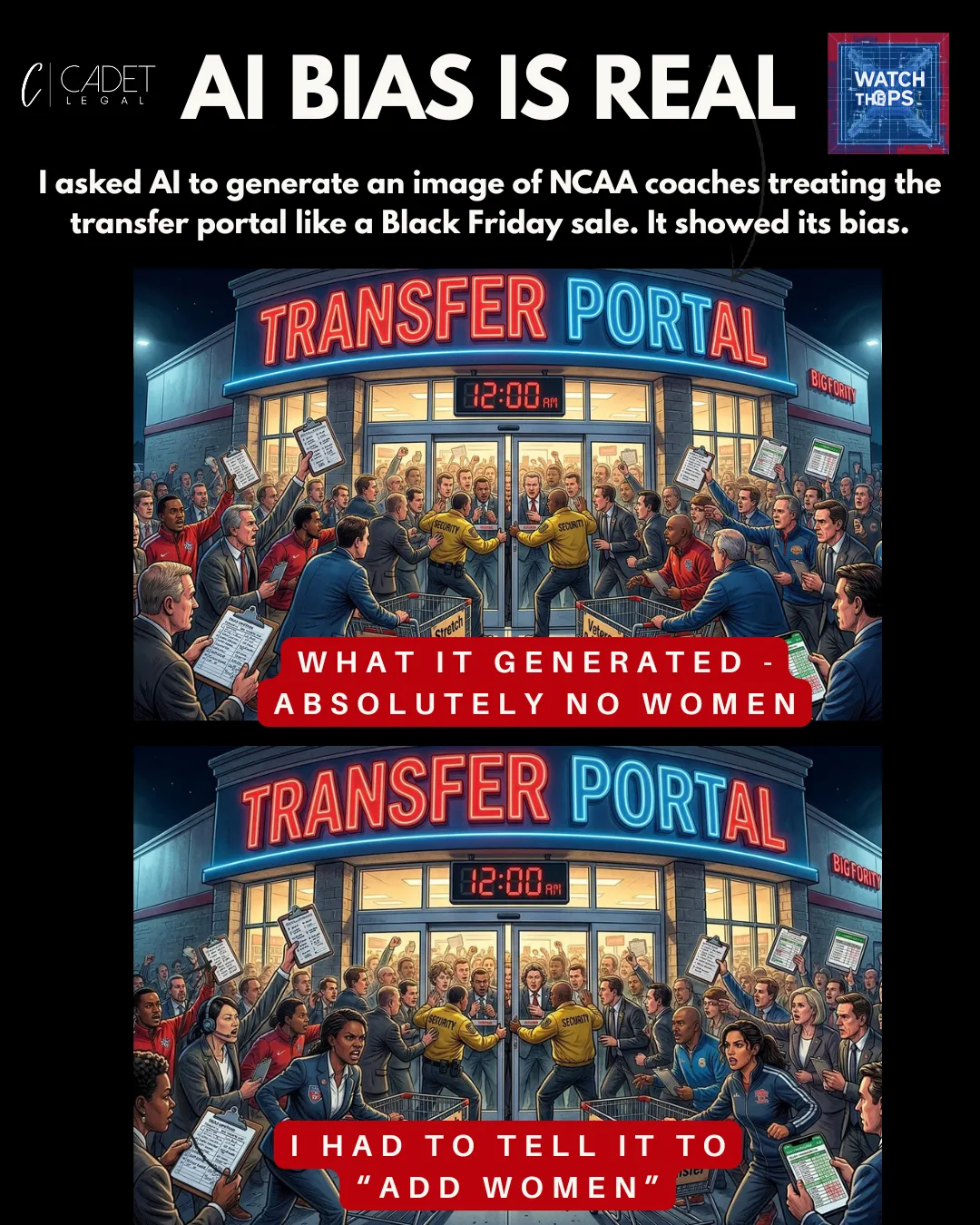

Look at the image above. The tool rendered only male sports professionals until I explicitly prompted it to “add women.” That’s a small but telling example of how AI can encode and surface bias even in “harmless” creative tasks, and how easily that can bleed into your brand, messaging, and culture.

AI isn’t “good” or “bad” — it’s an amplifier. It scales whatever is already in the source data, processes, and blind spots. And that’s where the legal risk lives.

Some AI use cases are simply higher risk than others. Hiring is a great example. The more you let AI sort, screen, and rank candidates, the more you risk importing biased patterns from historical data into decisions that directly affect people’s livelihoods and implicate anti‑discrimination laws. If your training data reflects past bias against protected classes, your “efficient” hiring pipeline can quietly reproduce that bias at scale.

At Cadet Legal, we’re bullish on AI but careful about where and how we use it. We’re intentionally starting with lower‑risk operational use cases, like internal CRM, project management, research workflows, and marketing support, while keeping the humans on my team firmly in the loop. Even there, we see the bias problem up close, as the image above illustrates.

Use AI. Learn it. Leverage it. But don’t blindly trust it. Human intelligence — especially legal, ethical, and operational judgment — is still your best protection against AI risk.

If you have questions about using AI in your business operations, talk to a firm that believes in the efficiency upside of this technology but also appreciates its legal and business risks.